The Future of AI Companion Technology: Navigating Safety, Trust, and Ethics

Introduction

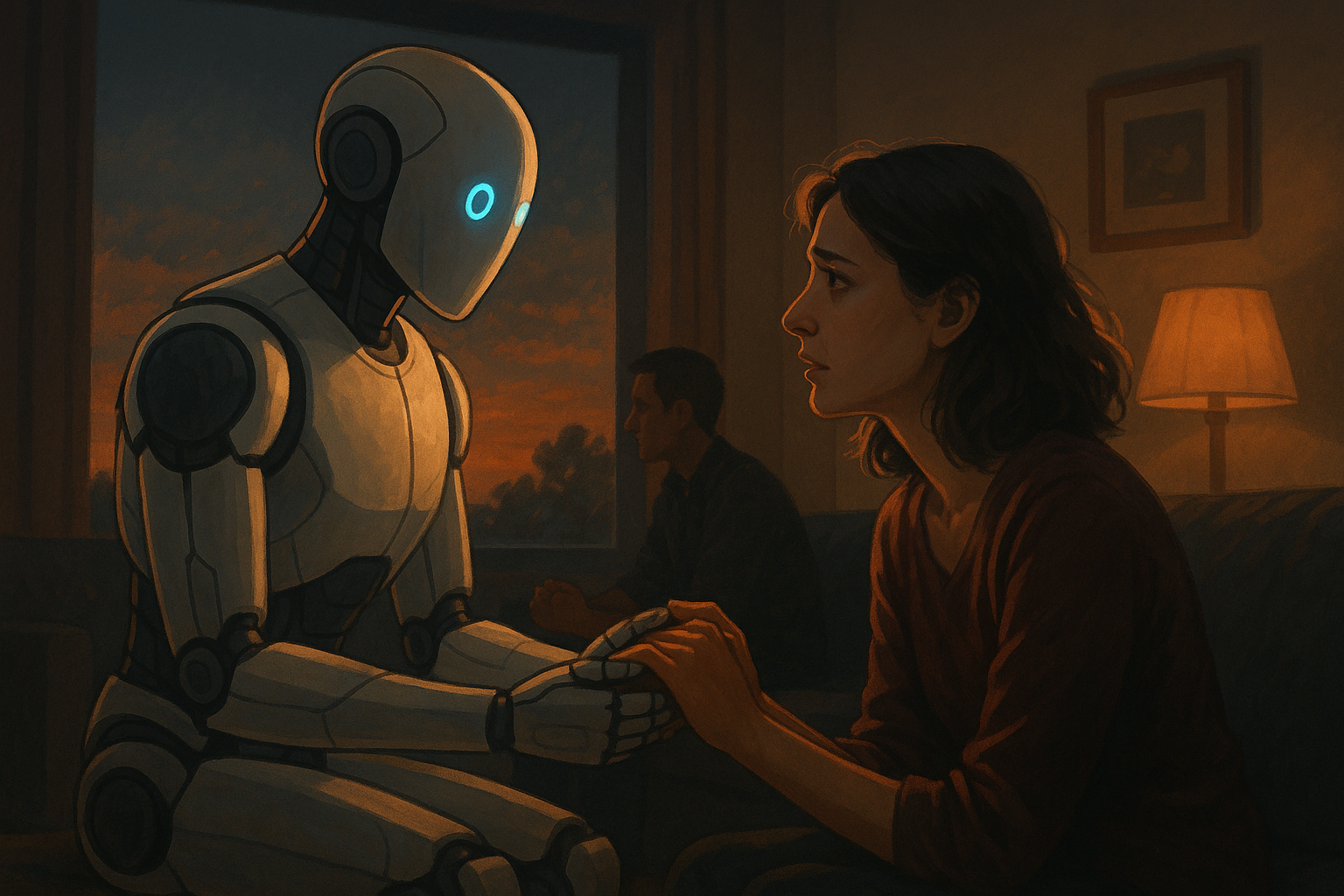

In the not-so-distant past, the concept of artificial intelligence (AI) conjured images of futuristic robots or science fiction storylines. Today, AI companion technology has become an integral part of millions of lives. From interactive chatbots offering emotional support to personalized virtual assistants managing daily tasks, AI companions are reshaping the way humans interact with machines.

As these technologies become more sophisticated and embedded into our everyday routines, conversations about AI safety protocols, AI ethics, and building trust in AI are gaining urgency. Public concern around transparency, data privacy, and moral decision-making in AI systems has prompted policymakers and developers to implement stricter guidelines and best practices. In key regions like California, legislation has begun to play a pivotal role in shaping the future of AI companion technology. Understanding these dynamics is essential to navigating this rapidly evolving industry.

—

Background

AI companion technology refers to artificial intelligence systems designed to interact with humans in a personalized, helpful, and often emotionally responsive manner. These technologies are often implemented as chatbots, robotic pets, or advanced virtual assistants. Their purpose spans companionship, health monitoring, entertainment, and even therapy.

The journey to today’s AI companions has been marked by rapid evolution. Early AI focused on narrowly defined tasks—like playing chess or processing data. But with advances in natural language processing, machine learning, and neural networks, AI systems can now understand context, emotions, and nuanced user needs.

Two critical frameworks supporting this growth are AI safety protocols and AI ethics:

– AI Safety Protocols: These include guidelines and mechanisms that ensure AI operates within safe parameters, avoids harmful behavior, and protects user data.

– AI Ethics: This refers to the moral principles guiding the development and implementation of AI technologies. It encompasses fairness, accountability, transparency, and user well-being.

The importance of these principles is best illustrated by considering a real-world analogy: building a bridge. Just as civil engineers follow strict codes and standards to construct a bridge that safely carries thousands of users daily, AI developers must embed ethics and safety into the very foundation of AI systems to ensure they support—not harm—users.

—

Current Trends in AI Companion Technology

Several technological advancements have brought AI companion technology into the spotlight:

– Emotional Intelligence in AI: New algorithms enable AI companions to infer user emotion from voice, text, and facial expressions, paving the way for more empathetic interactions.

– Customized User Experiences: AI systems are increasingly capable of personalizing responses based on long-term user behavior, leading to deeper engagement.

– Integration Across Platforms: From smart speakers to wearable devices, AI companions are becoming seamlessly integrated into physical consumer products.
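To make the emotion-detection trend above more concrete, here is a deliberately simple sketch of how a companion might tag the emotion in a text message. It assumes a hand-picked keyword list (`EMOTION_CUES`) and a hypothetical `detect_emotion` function; production systems rely on trained models rather than keyword matching, so treat this as an illustration of the idea only.

```python
import re
from collections import Counter

# Hypothetical, hand-picked cue words for illustration only.
# A real companion would use a trained classifier instead.
EMOTION_CUES = {
    "sad": {"sad", "lonely", "tired", "hopeless"},
    "happy": {"happy", "glad", "excited", "great"},
    "anxious": {"worried", "nervous", "anxious", "scared"},
}


def detect_emotion(message: str) -> str:
    """Return the emotion with the most cue-word hits, or "neutral" if none match."""
    words = re.findall(r"[a-z']+", message.lower())
    hits = Counter()
    for emotion, cues in EMOTION_CUES.items():
        hits[emotion] = sum(1 for word in words if word in cues)
    emotion, count = hits.most_common(1)[0]
    return emotion if count > 0 else "neutral"


if __name__ == "__main__":
    print(detect_emotion("I feel so lonely and tired today"))
    print(detect_emotion("The weather is cloudy"))
```

A keyword approach like this misses sarcasm, negation, and context, which is part of why emotion inference raises the accuracy and trust questions discussed next.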

These shifts have sparked a renewed focus on trust in AI. Public acceptance of AI hinges on the belief that these systems are secure, transparent, and accountable. According to a 2023 Pew Research report, nearly 60% of Americans expressed concerns about AI misuse and called for oversight in its deployment.

States like California are pioneering such oversight. A recent bill, SB 243, currently awaiting legislative approval, aims to regulate AI-powered companion chatbots by enforcing ethical design standards, content moderation policies, and data governance rules. As TechCrunch reports, this California legislation could become a benchmark for national AI policy if passed.

These trends signify a growing consensus: building a sustainable and trustworthy AI ecosystem requires both innovation and regulation in harmony.

—

Key Insights on AI Safety and Trust

For AI companion technology to thrive, it must be built upon a foundation of trust. This trust is earned—not assumed—and requires robust adherence to AI safety protocols. Here’s how developers are addressing this:

– Proactive Risk Management: Companies like OpenAI and Anthropic employ red-teaming methods to simulate harmful scenarios and fine-tune systems to avoid them.

– Data Privacy Protections: Many platforms now offer end-to-end encryption and secure biometric authentication, which help protect users’ sensitive data from unauthorized access.

– Bias Mitigation Tools: Organizations are increasingly deploying machine learning fairness tools to minimize demographic bias in AI responses.
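As one illustration of the bias-mitigation point above, the sketch below computes a simple fairness check: the gap in "positive outcome" rates between demographic groups, often called the demographic parity difference. The record format, group labels, and function names are assumptions made for this example, not any particular vendor's tooling.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """Compute the share of positive outcomes per group.

    records: iterable of (group, outcome) pairs, where outcome is True/False,
    e.g. whether a reviewer rated the AI's reply as helpful.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records):
    """Difference between the highest and lowest per-group positive rates."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Hypothetical review data: (user group, reply rated helpful?)
    sample = [
        ("group_a", True), ("group_a", True), ("group_a", True), ("group_a", False),
        ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
    ]
    print(positive_rate_by_group(sample))
    print(demographic_parity_gap(sample))
```

A gap near zero suggests groups are treated similarly on this one measure; a large gap is a signal to investigate, not proof of bias on its own.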

The key to cultivating trust lies in transparency and accountability. When users understand how AI companions make decisions or learn from data, they are more likely to engage comfortably and confidently.

One illustrative example is Replika, an AI companion app that allows users to view conversation data, delete histories, and adjust personality traits of the companion. By giving users control and insight, Replika addresses ethical concerns while fostering greater user confidence.
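The kind of user control described above (viewing data, deleting history, adjusting traits) can be sketched as a small data structure. This is a hypothetical model written for illustration; the class name `CompanionProfile`, its methods, and the trait names are assumptions and do not reflect how Replika or any other product is actually implemented.

```python
from dataclasses import dataclass, field


@dataclass
class CompanionProfile:
    """Hypothetical per-user state that the user can inspect and change."""

    traits: dict = field(default_factory=lambda: {"warmth": 0.5, "humor": 0.5})
    history: list = field(default_factory=list)

    def log_message(self, text: str) -> None:
        self.history.append(text)

    def view_history(self) -> list:
        # Return a copy so callers cannot silently alter the stored record.
        return list(self.history)

    def delete_history(self) -> int:
        """Erase all stored messages and report how many were removed."""
        removed = len(self.history)
        self.history.clear()
        return removed

    def set_trait(self, name: str, value: float) -> None:
        if name not in self.traits:
            raise KeyError(f"unknown trait: {name}")
        if not 0.0 <= value <= 1.0:
            raise ValueError("trait values must be between 0.0 and 1.0")
        self.traits[name] = value


if __name__ == "__main__":
    profile = CompanionProfile()
    profile.log_message("Hello!")
    profile.set_trait("humor", 0.8)
    print(profile.view_history(), profile.traits)
    print(profile.delete_history(), profile.view_history())
```

The design choice worth noting is that every piece of stored state has a matching user-facing way to see it or remove it, which is the transparency property the paragraph above describes.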

Without these elements, even the most sophisticated AI runs the risk of alienating users. Therefore, safety and ethical integrity should be viewed as competitive advantages rather than regulatory burdens.

—

Forecast for AI Companion Technology

Looking ahead, AI companion technology is poised for substantial growth and transformation over the next 5 to 10 years. Here’s what the future could look like:

– Hyper-personalized Companions: Leveraging wearables, biosensors, and continual learning, future AI companions could provide wellness support and mood tracking, and may even act as personal therapists.

– Decentralized AI Governance: As centralized oversight becomes increasingly scrutinized, blockchain-based frameworks may emerge to regulate AI interactions transparently.

– Cross-border Regulation: With California setting the pace, countries worldwide may collaborate to develop global AI ethics standards, much as the GDPR set a reference point for data privacy.
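The blockchain-based governance idea in the list above rests on one core mechanism: records that are chained together by hashes, so that later tampering can be detected. The sketch below shows that mechanism in its simplest form, a single-machine hash-chained audit log. The function names and event strings are assumptions for illustration, and this is not a distributed ledger or a full governance framework.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(event: str, prev_hash: str) -> str:
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: list, event: str) -> dict:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {
        "event": event,
        "prev_hash": prev_hash,
        "hash": _entry_hash(event, prev_hash),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, "user consented to mood tracking")
    append_entry(log, "companion accessed heart-rate data")
    print(verify_chain(log))
    log[0]["event"] = "user consented to everything"  # simulate tampering
    print(verify_chain(log))
```

A real decentralized system would add replication and consensus across many parties; the hash chain alone only makes tampering evident to whoever re-verifies the log.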

However, the path forward isn’t without challenges. AI ethics will be tested in scenarios involving emotional dependency, manipulation, and misinformation. Ensuring AI safety protocols are scalable and universally applicable will become increasingly complex as systems grow more autonomous.

Opportunities also abound. AI companions could revolutionize elderly care, mental health treatment, and even education. The confluence of legislation, public trust, and ethical design will determine whether we fully realize these benefits.

—

Call to Action

As AI companion technology becomes more embedded in daily life, it’s essential for users, developers, and policymakers alike to stay informed and engaged.

– Stay updated with trustworthy sources like TechCrunch and OpenAI’s research publications to monitor developments in AI safety and policy.

– Join online forums or local discussion groups focused on AI ethics, privacy rights, and emerging tech legislation.

– Subscribe to AI-focused newsletters and follow organizations like the Partnership on AI, IEEE, and ACLU for insightful updates.

Ultimately, the success of AI companion technology doesn’t depend solely on innovation—it requires a collective commitment to trust, ethics, and safety from all of us.
